In Fortran, the quantity following the colon specifies the upper bound of the array section being assigned. Thus x[j:k] in C describes the same array section as X(j:j+k-1) in Fortran. Naturally, the number of elements must be the same for each array or pointer in the assignment.
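As a minimal sketch of the Fortran side of this comparison, the section X(j:j+k-1) has lower bound j and upper bound j+k-1, so it always holds k elements. The module and routine names below (`section_demo`, `copy_section`) are hypothetical and chosen only for illustration.

```fortran
module section_demo
  implicit none
contains
  ! Copy k elements, starting at index j, from src into dst.
  ! Both sides use the same section bounds, so the shapes conform.
  subroutine copy_section(dst, src, j, k)
    real,    intent(inout) :: dst(:)
    real,    intent(in)    :: src(:)
    integer, intent(in)    :: j, k
    ! Lower bound j, upper bound j+k-1: exactly k elements on each side.
    dst(j:j+k-1) = src(j:j+k-1)
  end subroutine copy_section
end module section_demo
```

Calling `copy_section(a, b, 3, 4)` would copy elements 3 through 6 of `b` into the same positions of `a` and leave the rest of `a` untouched.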

To enable support for the SIMD features of OpenMP in the Intel compilers, applications should be compiled with either /Qopenmp or /Qopenmp-simd (Windows*) or with either -qopenmp or -qopenmp-simd (Linux* or macOS*). If the application does not use any OpenMP threading APIs, but only SIMD constructs, /Qopenmp-simd (-qopenmp-simd) should be preferred. Beginning with the Intel Compilers Version 19, this option is included in the default optimization level, -O2.

The compiler’s vectorization decisions are typically based on incomplete information, since language standards have not provided a way to convey to the compiler all the information it needs to make more effective decisions, or to convey programmer intent. The auto-vectorizer can be more effective if the compiler is given hints through compiler directives, but whether or not a loop is vectorized still depends on the compiler’s internal analysis and the information available to it. Requirements for auto-vectorization are discussed here.

Explicit Vector Programming is an attempt to remove that uncertainty: the programmer, using knowledge of the application, can instruct the compiler to vectorize a loop. The method is analogous to threading with OpenMP, where a directive may require the compiler to thread a loop. Just like in OpenMP, the programmer is responsible for telling the compiler about reduction variables and private variables, to avoid race conditions.

Beginning with the OpenMP 4.0 standard, directives are available as SIMD constructs that support explicit vectorization. The Intel Fortran and C++ compilers have supported the OpenMP SIMD constructs since the release of the Intel Compilers Version 15.
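The programmer's responsibility for reduction and private variables can be sketched with the OpenMP 4.0 `simd` directive. This is an illustrative example rather than code from the article: the module name `simd_demo` and the function `sum_sq_diff` are hypothetical. Here `s` accumulates across iterations, so it is declared as a reduction, and `t` is a per-iteration temporary, so it is declared private.

```fortran
module simd_demo
  implicit none
contains
  ! Sum of squared differences between the first n elements of x and y.
  function sum_sq_diff(x, y, n) result(s)
    integer, intent(in) :: n
    real,    intent(in) :: x(n), y(n)
    real    :: s, t
    integer :: i
    s = 0.0
    ! Ask the compiler to vectorize this loop.
    ! s is combined across SIMD lanes with +; each lane gets its own t.
    !$omp simd reduction(+:s) private(t)
    do i = 1, n
      t = x(i) - y(i)
      s = s + t*t
    end do
  end function sum_sq_diff
end module simd_demo
```

When the source is compiled without an option that enables OpenMP SIMD support, the `!$omp simd` line is treated as an ordinary comment and the loop still computes the same sum, so the directive can be added without changing the serial meaning of the code.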

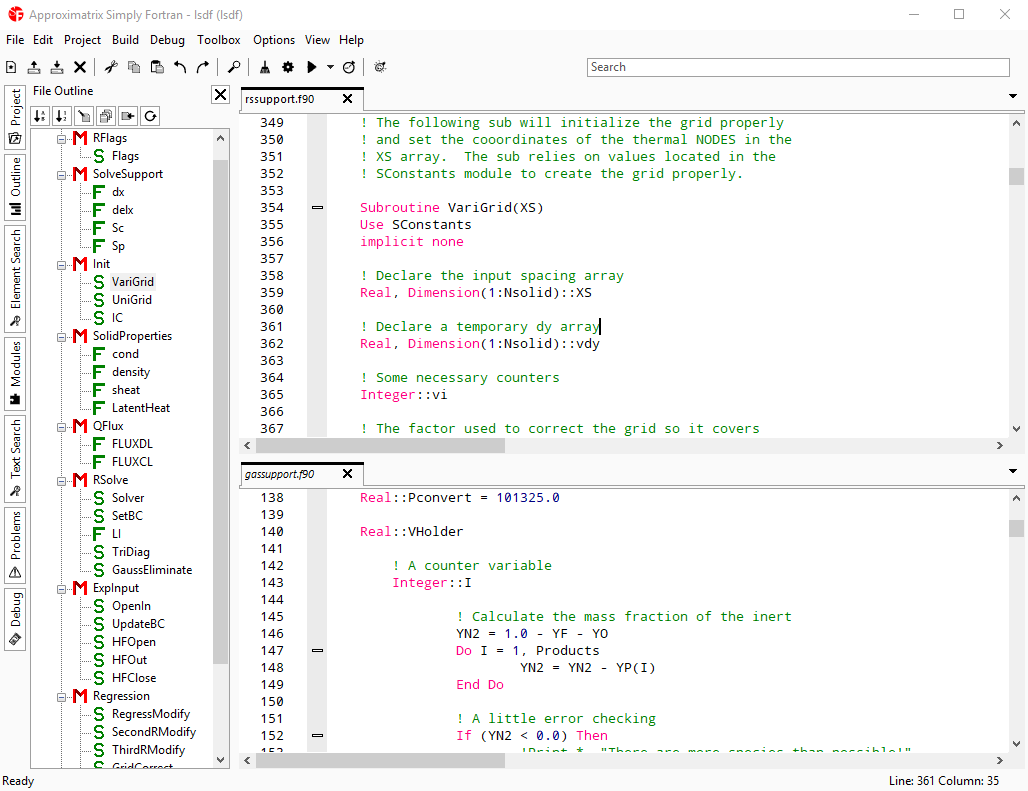

If suitable optimized library functions are available, such as the ones in the Intel® Math Kernel Library, these may be a simple and effective way for Fortran developers to take advantage of SIMD parallelism on Intel Architecture. Otherwise, the main way has been through auto-vectorizing compilers. The SIMD intrinsic functions that are available to C and C++ developers do not have a corresponding Fortran interface, and, in any case, require a great deal of programming effort and introduce an undesirable architecture dependence.

Auto-vectorization has its limitations. The compiler must be conservative, and not make any optimizations that could lead to different results from unoptimized code, even for unlikely-seeming values of input data. It must also estimate whether a code transformation is likely to yield faster or slower code.
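As a hypothetical illustration of why the compiler has to be conservative, consider a loop that updates an array through an index vector. The names `scatter_demo` and `scatter_add` are assumptions made for this sketch. If `idx` contains repeated values, successive iterations update the same element and must run in order; a compiler that cannot see the contents of `idx` may therefore decline to vectorize the loop, even when the programmer knows the indices are all distinct.

```fortran
module scatter_demo
  implicit none
contains
  ! Add b(i) into a(idx(i)) for i = 1..n.
  subroutine scatter_add(a, b, idx, n)
    integer, intent(in)    :: n
    real,    intent(inout) :: a(:)
    real,    intent(in)    :: b(n)
    integer, intent(in)    :: idx(n)
    integer :: i
    ! Repeated entries in idx create a dependence between iterations.
    ! The values of idx are only known at run time, so the compiler
    ! has to assume that such repeats are possible.
    do i = 1, n
      a(idx(i)) = a(idx(i)) + b(i)
    end do
  end subroutine scatter_add
end module scatter_demo
```

Executed serially, the loop gives the right answer whether or not indices repeat, and that is the behavior an optimizing compiler is obliged to preserve.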

This article will focus on SIMD parallelism; multi-core parallelism is addressed elsewhere, with both OpenMP* and MPI being widely used in Fortran applications.

No longer does Moore’s Law result in higher frequencies and improved scalar application performance; instead, higher transistor counts lead to increased parallelism, both through more cores and through wider SIMD registers. To get the full performance benefit from these improvements in processor technology, applications need to take advantage of both forms of parallelism.